So Long WhatsApp

For years, I refused to install WhatsApp messenger because I had customer contact details and other information on my phone.

Eventually, I made a concerted effort to clear all that out, which had the side effect of letting me install WhatsApp. I did so based in part on its promise that personal data (names, addresses, internet searches or location data) would not be collected, much less used.

When WhatsApp was acquired by Facebook, it was inevitable that the promise would eventually be broken, something made all the more apparent when one of WhatsApp's founders left Facebook after a disagreement about privacy.

So, disappointing as it is, the recent news was entirely predictable.

WhatsApp have pushed out a notification of a change to their terms and conditions. The new terms allow them to share data with Facebook, including (but not limited to):

- Users' phone numbers

- Users' contact lists (see below)

- Profile information

- Status information

- "Diagnostic data" - what phone model you're using, what networks you're on etc

- Location data

- "User content"

- Details of purchases made with businesses using WhatsApp, including Financial Information

- "Usage data"

These changes will come into effect on 8 Feb 2021. If you disagree with them, the only recourse is to delete your account before then (which probably isn't GDPR compliant, but Facebook tend not to worry about that).

I registered for Facebook over a decade and a half ago, although I haven't used the account in anger for 5 years now.

I've written about the privacy issues with Facebook and other social media for years, but Facebook in particular has shown itself, through its unwillingness to act, to be a danger to the very foundations of democracy.

If you haven't watched Carole Cadwalladr's TED talk ("Facebook's role in Brexit - and the threat to democracy"), I strongly recommend it: it's just 15 minutes of your life.

In case you've made the mistake of thinking that's just one crazy cat-lady, a December 2018 report for the US Senate Intelligence Committee concluded that social media platforms pose a threat to democracy.

With COVID-19 raging, we're all too well aware of the anti-5G and anti-mask conspiracies that gather followers in Facebook groups.

Genocide

It's not just an issue in the Western world, either.

Facebook was implicated in the Rohingya genocide in Myanmar, with a Facebook-commissioned report noting that the company did little to try and figure out facts on the ground.

“There are a lot of people at Facebook who have known for a long time that the company should have done more to prevent the gross misuse of its platform in Myanmar.” - Matthew Smith, Fortify Rights

Unfortunately, Myanmar isn't even an isolated case: Facebook was used to facilitate violence during the 2018 anti-Muslim riots in Sri Lanka.

Despite these tragic events, Facebook's upper echelons seem not to have learnt any lessons. In 2020, Mark Zuckerberg defended the decision not to suspend Steve Bannon from Facebook on the basis that he hadn't broken enough rules when he called for the beheading of two senior US officials: FBI Director Christopher Wray and infectious disease specialist Dr Anthony Fauci.

The events in the US yesterday show exactly why it's so dangerous for platforms to allow calls for violence to be distributed to millions of users. Incitement to violence tends to result in exactly that.

Amongst other things, Facebook's platform is actively being used to spread:

- Hate speech: racism, white supremacy etc

- Misinformation: anti-vaxxer material, COVID misinformation

- Conspiracy theories: QAnon and others

- Child exploitation material

- Autism miracle cures (for example, giving vulnerable children bleach enemas)

- Pro-anorexia groups

- Livestreams of rapes, murders (including the infamous Christchurch massacre) and suicides

This content is actively pushed into users' streams as Facebook's algorithms attempt to increase "engagement" (the most important metric, as it translates into ad dollars). If you're perceived to have engaged with a type of content, the algorithms will push more and more of it, "confirming" the stunted world-view you're being sold. This phenomenon is known as a social-media or filter bubble.
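The feedback loop is easy to reproduce with a toy model. To be clear, this is not Facebook's ranking system, whose details aren't public. It simply assumes that the feed is split across topics in proportion to a per-topic weight, and that each topic's weight grows with the engagement it earns. The function name, topics and engagement rates are all made up for illustration.

```python
def simulate_feed(engagement_rate, rounds, boost=0.5):
    """Toy rich-get-richer model of an engagement-driven feed (hypothetical).

    engagement_rate maps each topic to the fraction of shown items of that
    topic the user engages with. Returns the feed composition
    (topic -> share of the feed) at the start of each round.
    """
    weights = {topic: 1.0 for topic in engagement_rate}  # start with a balanced feed
    history = []
    for _ in range(rounds):
        total = sum(weights.values())
        shares = {topic: w / total for topic, w in weights.items()}
        history.append(shares)
        for topic, share in shares.items():
            # Engagement earned = how much of the topic was shown x how often the user bites.
            engagement = share * engagement_rate[topic]
            # Reward the topic so that it gets shown more next round.
            weights[topic] += boost * engagement
    return history
```

Running `simulate_feed({"gardening": 0.1, "conspiracy": 0.3, "local news": 0.1}, rounds=200)` starts with each topic taking a third of the feed, but the topic the user engages with most grows its share every single round and ends up dominating. Even a modest difference in engagement compounds, which is exactly the narrowing described above.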

Facebook is a platform that was designed to be addictive, so it should come as no surprise that the content on there has a far-reaching impact in meat-space.

There's no other way of saying it: Facebook has become a pox upon societies globally.

A river of Data

Ultimately, these issues are driven by one underlying constant: the ever-present need to monetise users' data by profiling them and selling advertising space. It's something Facebook is incredibly good at.

Giving data to Facebook only serves to feed that beast, perpetuating the harm.

What this means is that this isn't just an issue of individual privacy. Even if you're OK with what they do with your data (and if Facebook were ever truly honest about it, you probably wouldn't be), by feeding Facebook data you're helping to ensure that the mechanisms that cause harm continue to exist and to be refined.

Facebook tries to collect data in every way it possibly can, including:

- Recording every action whilst on Facebook (obviously)

- Capturing information whenever you land on a page with either Facebook ads or a "Like" button, effectively recording your browsing history (even Pornhub had Like buttons at one point, and no, Incognito mode wouldn't necessarily have helped)

- Trying to acquire access to medical data from various US hospitals

- Profiling users' behaviour in order to assess their emotional state. Facebook later said there had been an "oversight" (much like the "oversight" that led to the Cambridge Analytica scandal)

- The Android app collected records of users' phone calls and SMS messages. The app, of course, is often preloaded onto phones and can't be removed

- Location data is collected pretty much any way they can get it
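To see why an embedded "Like" button amounts to a browsing history: when a browser loads a widget from a third-party domain, that domain is typically told which page it's embedded in (via the `Referer` header, or often as a parameter in the widget's own URL), and, where third-party cookies are allowed, it also receives any cookie it set earlier. The sketch below is a hypothetical tracker-side log processor, not Facebook's code; the record format and names are assumptions.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class WidgetRequest:
    """One hypothetical log entry: a browser fetched an embedded widget."""
    cookie_id: str  # identifier from a cookie the tracker set on an earlier visit
    page_url: str   # the page the widget was embedded in
    timestamp: int  # seconds since the epoch


def browsing_histories(requests):
    """Group widget requests by cookie, returning each browser's pages in time order."""
    histories = defaultdict(list)
    for req in sorted(requests, key=lambda r: r.timestamp):
        histories[req.cookie_id].append(req.page_url)
    return dict(histories)
```

No clicking is needed: merely loading a page that carries the widget adds a row to the log. If the cookie also belongs to a logged-in account, that history is tied to a named person rather than an anonymous browser.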

There are far too many "mistakes" to list (have a look at the table of contents of the Wikipedia page for Criticism of Facebook); needless to say, Facebook is, at heart, a massive data whore.

WhatsApp's ubiquity was built, in no small part, on the promises it made about the use of data (and, to some extent, on the fact that it wasn't Facebook Messenger).

Now that almost everyone is on WhatsApp, there's a significant element of vendor lock-in: it's hard to leave because, whilst alternatives exist, they're of limited use if your contacts aren't also on them.

My Data

I've written about privacy online since before I even had a website.

Years ago, I acted to stymie as many of the data flows as possible: all Facebook (actually, all social media) trackers and beacons are blocked, and my Facebook account sits all but abandoned. I only maintain the account so that I can periodically pull my data down, see what's been collected, figure out where it came from and move to close that source off too.

WhatsApp is a useful tool, one that many of my contacts use, almost indispensable in fact.

However, I cannot in good conscience feed into the machine that is Facebook. It's not just about the data they'll collect on me, but about the outcome of letting them collect that data.

There'll be no impact on Facebook; in fact, they won't even notice. But I'll be continuing to avoid playing a part in perpetuating their cycle of lies and harm.

So, unless there's a sudden change of direction by WhatsApp (which seems unlikely), I'll no longer be reachable via WhatsApp as of 7 Feb 2021. I will continue to be reachable on Signal and Telegram, as well as via more traditional means.

Contact lists

One of the things WhatsApp intend to share is contact lists, i.e. details of each of the contacts in your phone. This, unfortunately, is quite common: many apps hoover up contact lists.

It probably isn't illegal, but it should be. When Facebook take data on you, they're (hopefully) doing it with your consent. However, when they take your contact list, they're taking other people's data, essentially having you consent on behalf of someone else.

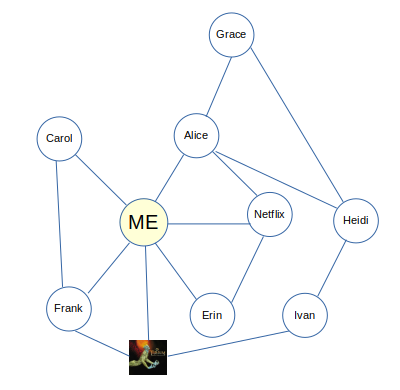

Contact Lists are fed into Facebook's social graph - building an association web to show who knows who (things like message histories are then used to add who interacts regularly with who):

In the graph above, it's quite plausible that neither Heidi nor Carol has ever directly interacted with the system, and that instead they were in a contact list imported by one of the others they're linked to. You can learn more about what the social graph is in this article.
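A minimal sketch of how contact-list uploads alone produce the situation in the graph, assuming each upload is simply a user plus the set of people in their address book. Alice and Bob are hypothetical uploaders, and the function names are made up; this illustrates the idea of a social graph, not Facebook's implementation.

```python
from collections import defaultdict


def build_social_graph(uploads):
    """uploads maps each app user to the people in their address book.

    Returns an undirected adjacency map: person -> set of people linked to them.
    """
    graph = defaultdict(set)
    for uploader, contacts in uploads.items():
        for contact in contacts:
            if contact == uploader:
                continue  # ignore people who saved their own number
            graph[uploader].add(contact)
            graph[contact].add(uploader)
    return dict(graph)


def non_users(graph, uploads):
    """People who appear in the graph without ever having uploaded anything themselves."""
    return set(graph) - set(uploads)


def friends_of_friends(graph, person):
    """People two hops away who aren't already direct links."""
    direct = graph.get(person, set())
    two_hops = set()
    for friend in direct:
        two_hops |= graph.get(friend, set())
    return two_hops - direct - {person}
```

With `uploads = {"Alice": {"Bob", "Carol"}, "Bob": {"Alice", "Heidi"}}`, Carol and Heidi end up as nodes with links of their own, and Heidi is even connected, at two hops, to Alice, despite neither of them having consented to anything.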

In 2010 I wrote a post detailing how this social graph could be used to identify distributors of copyrighted material. That was 11 years ago, and the granularity of the graph has only increased since.

Another major change since then is that some parts of that graph are now accessible to third parties via Facebook's API, so it's no longer just Facebook who could easily draw these correlations.

It was this functionality, in part, which allowed Cambridge Analytica to collect the data they did in order to try and influence elections. Update: A little later into 2021, it was also revealed that Facebook's "find my friends" functionality was at the root of its 2019 data breach, which exposed the phone numbers of 500 million users.

The reality, of course, is that I'm shouting into the wind: the door's wide open and companies are never going to stop collecting contact lists. But just stop and think for a moment about how easily we've come to take it for granted that they'll hoover other people's information off our phones.

Update: 7 Feb 2021

Today is the day of my scheduled deletion. In the meantime, WhatsApp have pushed the T&C change back to May in a belated attempt at damage control. What they haven't done, unfortunately, is do much to improve their explanation of what the change will actually mean. Instead, they seem to have added some overly specific denials which cover things that most people weren't concerned about anyway.

I've also had a reply to my GDPR complaint, with an unsurprising conclusion, but a similarly specific denial:

We've reviewed your objection and we've found that to the extent the processing you're objecting to relies on the relevant legal basis for an objection under the GDPR, we have compelling legitimate grounds for this processing.

Please note that you have the right to contact the Irish Data Protection Commission, which is WhatsApp's lead supervisory authority (please see www.dataprotection.ie). You also have the right to contact your local data protection authority and to bring a claim before the courts

Your questions about our terms and privacy policy update are important to us. The terms and privacy policy update don’t affect the privacy of your personal messages with friends or family in any way. Neither WhatsApp or Facebook can read or listen to your personal messages or calls as they’ll always be end-to-end encrypted.

You’ll have until May 15 to review the new terms, and you’ll need to accept them by then to continue using WhatsApp. We recommend that you review the updates at your own pace to help you make a decision.

The privacy of message content was never really in doubt, since messages are E2E encrypted. What is in doubt (and remains so, given the reply doesn't cover it) is the privacy of:

- Message metadata: Who was the message sent to? When was it sent? Who do I frequently message? What groups am I in? etc

- Location data

- Device-specific data: useful for fingerprinting, and so for analysis elsewhere (including by web trackers)

- Contact lists
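To show why metadata matters even when content is encrypted, here's a minimal sketch that assumes only the "envelope" of each message is known (sender, recipient and the hour it was sent) and never the body. The record type and function names are hypothetical and not anything WhatsApp has described.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class MessageMeta:
    """Envelope data for one message; deliberately no body, as if it were encrypted."""
    sender: str
    recipient: str
    hour: int  # hour of day the message was sent, 0-23


def frequent_contacts(log, user, top=3):
    """Who the user exchanges the most messages with, most frequent first."""
    counts = Counter()
    for m in log:
        if m.sender == user:
            counts[m.recipient] += 1
        elif m.recipient == user:
            counts[m.sender] += 1
    return counts.most_common(top)


def active_hours(log, user):
    """Sorted list of the hours of the day in which the user sent at least one message."""
    return sorted({m.hour for m in log if m.sender == user})
```

Given a log where "me" messages Bob at 9am and 10pm, hears back from Bob at 9am and messages Carol at 11pm, `frequent_contacts(log, "me")` returns `[("Bob", 3), ("Carol", 1)]` and `active_hours(log, "me")` returns `[9, 22, 23]`. That's a closest contact and a daily routine, without reading a single message.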

I had also hoped that WhatsApp might link to a published Privacy Impact Assessment, or perhaps go into a bit more depth on what they're changing, and why. Instead, it's a very boilerplate (and not at all reassuring) reply.

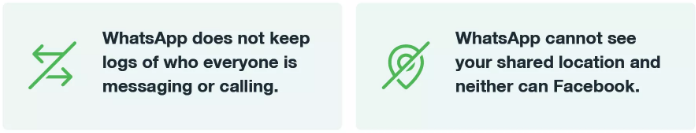

In each update pushed out, WhatsApp seem to focus on "What's not changing" (we can't see your messages) rather than explaining exactly what is changing and why. Where they do address certain concerns, the language used is odd. For example:

There's a difference between my shared location (i.e. I can turn that on and off) and the app's ability to collect my location. Why have the word "shared" in there (it's not as if it simplifies the sentence)? Similarly, not keeping logs of exactly who is being called or messaged doesn't preclude collecting data derived from those calls and messages.

Although this may seem overly specific or paranoid, over the years Facebook has shown that it doesn't deserve the benefit of the doubt, and it's incredibly difficult to put privacy back into the bottle once uncorked.

Either way, it's largely moot now. Even if the T&C change was innocent, the ineptness of its delivery means WhatsApp have helped Signal reach sufficient critical mass amongst my contacts that I can switch away from WhatsApp entirely with relative ease.