Cynet 360 Uses Insecure Control Channels

For reasons I won't go into here, I was recently taking a quick look over the "Cynet 360" agent, essentially an endpoint protection mechanism used as part of Cynet's "Autonomous Breach protection Platform".

Cynet 360 bills itself as "a comprehensive advanced threat detection & response cybersecurity solution for for [sic] today's multi-faceted cyber battlefield".

Which is all well and good, but what I was interested in was whether it could potentially weaken the security posture of whatever system it was installed on.

I'm a Linux bod, so the only bit I was interested in, or looked at, was the Linux server installer.

I ran the experiment in a VM which is essentially a clone of my desktop (minus things like access to real data etc).

Where you see [my_token] or (later) [new_token] in this post, there's actually a 32 byte alphanumeric token. [sync_auth_token] is an 88 byte token (it actually looks to be a hex encoded representation of a base64'd binary string).
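As a rough illustration of that "hex encoded representation of a base64'd binary string" shape, here's a minimal sketch. It assumes (and this is only an assumption) that the underlying binary value is 32 bytes, which happens to give exactly 88 characters once both layers are applied. The function names are made up for the example and the value is random stand-in data, not a real token.

```python
import base64
import os


def encode_like_sync_token(raw: bytes) -> str:
    """Base64-encode the raw bytes, then hex-encode the resulting ASCII text."""
    return base64.b64encode(raw).hex()


def decode_like_sync_token(token: str) -> bytes:
    """Reverse the two layers: hex -> base64 text -> raw bytes."""
    return base64.b64decode(bytes.fromhex(token))


# Stand-in value: 32 random bytes, NOT a real Cynet token.
raw = os.urandom(32)
token = encode_like_sync_token(raw)

print(len(token))                           # 88
print(decode_like_sync_token(token) == raw)  # True
```

32 bytes become 44 base64 characters, and hex-encoding those 44 characters doubles the length to 88.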

The Installer

The installer is delivered as nested zip files, the outer zip containing more zips with each of the OS specific installers inside

ben@milleniumfalcon:/tmp/cynet$ unzip CynetEPSInstaller.zip

Archive: CynetEPSInstaller.zip

inflating: CynetMSI.msi

inflating: CynetScanner_[my_token].exe

inflating: LINUX_CynetEPSInstaller.zip

inflating: MAC_CynetEPSInstaller.zip

inflating: README.pdf

ben@milleniumfalcon:/tmp/cynet$ unzip LINUX_CynetEPSInstaller.zip

Archive: LINUX_CynetEPSInstaller.zip

inflating: cy_runner.sh

inflating: cyservice

inflating: CynetEPS

inflating: DefaultEpsConfig.ini

The "installer" itself is cy_runner.sh. Looking in it, it raises a few concerns about the quality of the code we're actually installing, but otherwise isn't too alarming.

It does look like, in a previous version, they created unit files for systemd and are now switching back as there's a section which disables any Cynet unit files and installs a script into /etc/init.d instead.

Config

Configuration appears to initially take place in DefaultEpsConfig.ini. However, despite the extension, it's a binary blob so there's no real way to tell what's being set/what it does.

Endpoint protection systems generally need elevated privileges to do their job, and Cynet 360 is no exception. Elevated privileges though, require a lot of trust, and not being able to do something simple like audit config doesn't really do much to engender trust.

Running

I decided to give it a run to see what it did. The first run was a direct call to the executable without any arguments, resulting in a simple usage warning

Usage: /tmp/cynet/CynetEPS []...

Although it did also create a new (but uninteresting) file

ben@milleniumfalcon:/tmp/cynet$ cat Data/EPSDataPlist.xml

<?xml version="1.0" encoding="utf-8"?>

<plist><OrigArgList>/tmp/cynet/CynetEPS</OrigArgList></plist>

A quick look in the installer script gives us the correct arguments to use

cynetargs="slb.cynet.com -port 443 -lightagent -cs -msi -tknv 1 -tkn [my_token] -nosp"

Where [my_token] seems to be a user-specific auth-token. Presumably that's why the installer is delivered as nested zips, because they're building it "on demand" with the auth details already baked in.

We've got a FQDN in those args though - slb.cynet.com - so I figured I'd have a very quick reccy of it. What I found immediately is that it uses a self-signed certificate

ben@milleniumfalcon:/tmp/cynet$ openssl s_client -connect slb.cynet.com:443 -servername slb.cynet.com

CONNECTED(00000003)

depth=0 CN = Cynet

verify error:num=18:self signed certificate

verify return:1

depth=0 CN = Cynet

verify return:1

---

Certificate chain

0 s:/CN=Cynet

i:/CN=Cynet

---

Server certificate

-----BEGIN CERTIFICATE-----

MIIC8zCCAdugAwIBAgIJAJLcjGl6FrmyMA0GCSqGSIb3DQEBCwUAMBAxDjAMBgNV

BAMMBUN5bmV0MB4XDTE1MDUwNzA5MTU0NVoXDTI1MDUwNDA5MTU0NVowEDEOMAwG

A1UEAwwFQ3luZXQwggEiMA0GCSqGSIb3DQEBAQUAA4IBDwAwggEKAoIBAQC/IBdg

ieABA63ud98TcYw/f0nqAt3VTYAfDPZO9gzzC8pABFPayZLaH6oC1iPERaHBHc9t

mTMoJeCScMCJ6sEWrtjj+xoKslCF0KBF+EeYBF4yOZPp/5hhjhrJgXBd3zVyF/iS

RaW95012bC3AxospRakbuD3fTL4tgQGSOnEzS3/ESVZ3YDn1P0EASSa+HE/TBGt1

nioLM4UtSPx0kvW8P97h74csyLsyfpUOklV28lONMGrgYAl2eLJcbXBwcj49WcD7

cSH1DcmLxziNN7fSEceS+6GjPNSC2OHTf5B0akyQ0hSiVlZEP41HyCYWekZaJcZ4

IEVAfjSYM2+ZcQttAgMBAAGjUDBOMB0GA1UdDgQWBBSS2GIpgJhdc1qt8QUlZ+5m

Pd3RbzAfBgNVHSMEGDAWgBSS2GIpgJhdc1qt8QUlZ+5mPd3RbzAMBgNVHRMEBTAD

AQH/MA0GCSqGSIb3DQEBCwUAA4IBAQB43itg5nzAXyZxxAuFCZ53Osfjy9zMwW7n

Ij3k7AN2amt0lwkwOSvJ7UUqH9M0c8qNpoL49WZjm7F4MmlLaoJKspOnZFqHyz8V

XmyfaEQrU6Xq7g8ah9dY0CXtjj/VojwR3bYMTdfFqKpEv6wV8i+8Q6iL76A+PBoQ

tHnenZddky09HB9KHulrGNRpZ07/BtBTKT6S5IONIJ1IGFUMYuUnyV6+bhQ3gHrs

SH4DqdFpfZNOukpw/MBplLWBgdgYcaWbpLeWN4AludWaiypc93CB1UK4DZgQTFnz

GaFTUvJvvOaZgIl67Nt2dMRhLCLeo1Kn0xWe4iTRm3CxqHtc/BwR

-----END CERTIFICATE-----

subject=/CN=Cynet

issuer=/CN=Cynet

---

No client certificate CA names sent

Peer signing digest: SHA1

Server Temp Key: ECDH, P-256, 256 bits

---

SSL handshake has read 1303 bytes and written 497 bytes

Verification error: self signed certificate

---

New, TLSv1.2, Cipher is ECDHE-RSA-AES256-SHA384

Server public key is 2048 bit

Secure Renegotiation IS supported

Compression: NONE

Expansion: NONE

No ALPN negotiated

SSL-Session:

Protocol : TLSv1.2

Cipher : ECDHE-RSA-AES256-SHA384

Session-ID: 55420000264DC69AC6121B7F6BCCB32503646A0644D28724C2B8BE225E23F134

Session-ID-ctx:

Master-Key: B5FA2818FA11882831EC75A2F8B75B55FEBC2622F4366979A98A6F3F33FF91B28727918E70EBD7A280ECF8EDC1439F8D

PSK identity: None

PSK identity hint: None

SRP username: None

Start Time: 1586256465

Timeout : 7200 (sec)

Verify return code: 18 (self signed certificate)

Extended master secret: yes

---

That's an odd thing for a security company to do.

Quite aside from being self-signed, the certificate doesn't even purport to be for the name we're connecting to (there are no SANs either, so the name's not lurking in there).
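To make the name mismatch concrete, here's a simplified sketch of the kind of check a verifying client performs. It's deliberately cut down (exact match, plus a wildcard in the left-most label only) and isn't a full implementation of the real matching rules; modern clients also tend to look only at SANs and ignore the CN. The function names and the wildcard example are hypothetical.

```python
def name_matches(pattern: str, hostname: str) -> bool:
    """Simplified matching: exact match, or '*' standing in for the left-most label."""
    pattern = pattern.lower().rstrip(".")
    hostname = hostname.lower().rstrip(".")
    if pattern == hostname:
        return True
    if pattern.startswith("*."):
        p_labels = pattern.split(".")
        h_labels = hostname.split(".")
        # The wildcard covers exactly one label; the rest must be identical.
        return len(p_labels) == len(h_labels) and p_labels[1:] == h_labels[1:]
    return False


def cert_covers(hostname: str, common_name: str, sans: list) -> bool:
    """True if the CN or any SAN covers the hostname we connected to."""
    return any(name_matches(name, hostname) for name in [common_name, *sans])


# The certificate seen above: CN=Cynet and no SANs.
print(cert_covers("slb.cynet.com", "Cynet", []))                   # False

# Hypothetical certificate carrying a wildcard SAN, for comparison.
print(cert_covers("slb.cynet.com", "example", ["*.cynet.com"]))    # True
```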

Maybe their agent is configured to only trust that specific certificate?

So, I configured an Nginx stream instance to use a snakeoil cert and proxy onward to their service

stream {

limit_conn_zone $binary_remote_addr zone=ip_addr:30m;

server {

listen *:443 ssl;

proxy_pass 52.30.179.230:443;

proxy_connect_timeout 1s;

preread_timeout 2s;

limit_conn ip_addr 10;

}

ssl_certificate /etc/ssl/certs/ssl-cert-snakeoil.pem;

ssl_certificate_key /etc/ssl/private/ssl-cert-snakeoil.key;

ssl_session_timeout 1d;

ssl_session_tickets off;

ssl_protocols TLSv1.2 TLSv1.3;

ssl_prefer_server_ciphers on;

log_format str '$remote_addr\t-\t-\t[$time_local]\t$ssl_protocol\t'

'$ssl_session_reused\t$ssl_cipher\t$ssl_server_name\t$status\t'

'$bytes_sent\t$bytes_received';

access_log /var/log/nginx/slb.log str;

}

A quick edit of /etc/hosts ensured the client would connect to Nginx. If the client only trusts a specific certificate, then when started up, it should sever comms on receipt of a different cert, and hopefully also scream blue murder.
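For reference, "only trusting a specific certificate" (pinning) usually boils down to something like the sketch below: hash the certificate the server presented and compare it with a fingerprint shipped inside the client. This is a generic illustration rather than anything taken from the Cynet agent; the helper names are made up and the certificate bytes are placeholders.

```python
import hashlib
import hmac


def fingerprint(der_cert: bytes) -> str:
    """SHA-256 fingerprint of a DER-encoded certificate, as lowercase hex."""
    return hashlib.sha256(der_cert).hexdigest()


def is_pinned(der_cert: bytes, pinned_fingerprint: str) -> bool:
    """Accept the connection only if the presented cert matches the pinned fingerprint."""
    return hmac.compare_digest(fingerprint(der_cert), pinned_fingerprint.lower())


# Placeholder bytes standing in for real DER certificates (assumption for the demo).
expected_cert = b"placeholder bytes for the vendor certificate"
snakeoil_cert = b"placeholder bytes for the proxy certificate"
PINNED = fingerprint(expected_cert)

print(is_pinned(expected_cert, PINNED))  # True: the connection would proceed
print(is_pinned(snakeoil_cert, PINNED))  # False: a pinning client should hang up here
```

In a real Python client the DER bytes would come from `ssl.SSLSocket.getpeercert(binary_form=True)` once the handshake completes.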

I ran a capture, and started the client

root@milleniumfalcon:/etc/nginx# tcpdump -i lo host 127.0.0.1 and port 443

tcpdump: verbose output suppressed, use -v or -vv for full protocol decode

listening on lo, link-type EN10MB (Ethernet), capture size 262144 bytes

12:01:29.322624 IP slb.cynet.com.47926 > slb.cynet.com.https: Flags [F.], seq 2623797456, ack 2457483027, win 1365, options [nop,nop,TS val 752136306 ecr 752129396], length 0

12:01:29.322746 IP slb.cynet.com.https > slb.cynet.com.47926: Flags [F.], seq 1, ack 1, win 359, options [nop,nop,TS val 752136306 ecr 752136306], length 0

12:01:29.322755 IP slb.cynet.com.47926 > slb.cynet.com.https: Flags [.], ack 2, win 1365, options [nop,nop,TS val 752136306 ecr 752136306], length 0

12:01:33.301097 IP slb.cynet.com.47942 > slb.cynet.com.https: Flags [S], seq 4159323436, win 43690, options [mss 65495,sackOK,TS val 752137300 ecr 0,nop,wscale 7], length 0

12:01:33.301108 IP slb.cynet.com.https > slb.cynet.com.47942: Flags [S.], seq 1722966319, ack 4159323437, win 43690, options [mss 65495,sackOK,TS val 752137300 ecr 752137300,nop,wscale 7], length 0

12:01:33.301117 IP slb.cynet.com.47942 > slb.cynet.com.https: Flags [.], ack 1, win 342, options [nop,nop,TS val 752137300 ecr 752137300], length 0

12:01:33.301136 IP slb.cynet.com.47942 > slb.cynet.com.https: Flags [P.], seq 1:312, ack 1, win 342, options [nop,nop,TS val 752137300 ecr 752137300], length 311

12:01:33.301140 IP slb.cynet.com.https > slb.cynet.com.47942: Flags [.], ack 312, win 350, options [nop,nop,TS val 752137300 ecr 752137300], length 0

12:01:33.303420 IP slb.cynet.com.https > slb.cynet.com.47942: Flags [P.], seq 1:1184, ack 312, win 350, options [nop,nop,TS val 752137301 ecr 752137300], length 1183

12:01:33.303483 IP slb.cynet.com.47942 > slb.cynet.com.https: Flags [.], ack 1184, win 1365, options [nop,nop,TS val 752137301 ecr 752137301], length 0

12:01:33.303941 IP slb.cynet.com.47942 > slb.cynet.com.https: Flags [P.], seq 312:438, ack 1184, win 1365, options [nop,nop,TS val 752137301 ecr 752137301], length 126

12:01:33.304284 IP slb.cynet.com.https > slb.cynet.com.47942: Flags [P.], seq 1184:1235, ack 438, win 350, options [nop,nop,TS val 752137301 ecr 752137301], length 51

12:01:33.304392 IP slb.cynet.com.47942 > slb.cynet.com.https: Flags [P.], seq 438:471, ack 1235, win 1365, options [nop,nop,TS val 752137301 ecr 752137301], length 33

12:01:33.342396 IP slb.cynet.com.https > slb.cynet.com.47942: Flags [.], ack 471, win 350, options [nop,nop,TS val 752137311 ecr 752137301], length 0

12:01:33.342418 IP slb.cynet.com.47942 > slb.cynet.com.https: Flags [P.], seq 471:750, ack 1235, win 1365, options [nop,nop,TS val 752137311 ecr 752137311], length 279

12:01:33.342424 IP slb.cynet.com.https > slb.cynet.com.47942: Flags [.], ack 750, win 359, options [nop,nop,TS val 752137311 ecr 752137311], length 0

12:01:33.804739 IP slb.cynet.com.47948 > slb.cynet.com.https: Flags [S], seq 2366777137, win 43690, options [mss 65495,sackOK,TS val 752137426 ecr 0,nop,wscale 7], length 0

12:01:33.804747 IP slb.cynet.com.https > slb.cynet.com.47948: Flags [S.], seq 2218924967, ack 2366777138, win 43690, options [mss 65495,sackOK,TS val 752137426 ecr 752137426,nop,wscale 7], length 0

12:01:33.804757 IP slb.cynet.com.47948 > slb.cynet.com.https: Flags [.], ack 1, win 342, options [nop,nop,TS val 752137426 ecr 752137426], length 0

12:01:33.807094 IP slb.cynet.com.https > slb.cynet.com.47948: Flags [P.], seq 1:1184, ack 312, win 350, options [nop,nop,TS val 752137427 ecr 752137426], length 1183

12:01:33.807185 IP slb.cynet.com.47948 > slb.cynet.com.https: Flags [.], ack 1184, win 1365, options [nop,nop,TS val 752137427 ecr 752137427], length 0

12:01:33.807706 IP slb.cynet.com.47948 > slb.cynet.com.https: Flags [P.], seq 312:438, ack 1184, win 1365, options [nop,nop,TS val 752137427 ecr 752137427], length 126

12:01:33.807967 IP slb.cynet.com.https > slb.cynet.com.47948: Flags [P.], seq 1184:1235, ack 438, win 350, options [nop,nop,TS val 752137427 ecr 752137427], length 51

12:01:33.808066 IP slb.cynet.com.47948 > slb.cynet.com.https: Flags [P.], seq 438:471, ack 1235, win 1365, options [nop,nop,TS val 752137427 ecr 752137427], length 33

12:01:33.809564 IP slb.cynet.com.47948 > slb.cynet.com.https: Flags [FP.], seq 471:19204, ack 1235, win 1365, options [nop,nop,TS val 752137427 ecr 752137427], length 18733

12:01:33.809576 IP slb.cynet.com.https > slb.cynet.com.47948: Flags [.], ack 19205, win 1373, options [nop,nop,TS val 752137427 ecr 752137427], length 0

12:01:33.827478 IP slb.cynet.com.https > slb.cynet.com.47948: Flags [F.], seq 1235, ack 19205, win 1373, options [nop,nop,TS val 752137432 ecr 752137427], length 0

12:01:33.827488 IP slb.cynet.com.47948 > slb.cynet.com.https: Flags [.], ack 1236, win 1365, options [nop,nop,TS val 752137432 ecr 752137432], length 0

12:01:38.450419 IP slb.cynet.com.47942 > slb.cynet.com.https: Flags [F.], seq 750, ack 1235, win 1365, options [nop,nop,TS val 752138588 ecr 752137311], length 0

12:01:38.450577 IP slb.cynet.com.https > slb.cynet.com.47942: Flags [F.], seq 1235, ack 751, win 359, options [nop,nop,TS val 752138588 ecr 752138588], length 0

12:01:38.450586 IP slb.cynet.com.47942 > slb.cynet.com.https: Flags [.], ack 1236, win 1365, options [nop,nop,TS val 752138588 ecr 752138588], length 0

Oh dear.

You may be wondering why I chose to use Nginx's stream rather than http here? I actually originally stood up an HTTPS server, and have skipped that step here for brevity's sake - the traffic generated on port 443 is not HTTPS traffic, but instead appears to be some kind of binary control traffic (I didn't delve too far in this respect as this was only a quick look).

Presumably Port 443 was selected due to it having an extremely high chance of being allowed out of egress firewalls.

The Sync Module

During the run above, the client started to configure itself, and wrote some new config out

ben@milleniumfalcon:/tmp/cynet$ cat Data/EPSDataPlist.xml

<?xml version="1.0" encoding="utf-8"?>

<plist>

<OrigArgList>./CynetEPS slb.cynet.com -port 443 -lightagent -cs -msi -tknv 1 -tkn cde7ab18-6a22-4850-8179-f51b222987d3 -nosp</OrigArgList>

<ServerArgList>127.0.0.1 -port 2477 -cpulimit 15 -lightagent -secip slb.cynet.com -secport 443 -adtdisable -syncmodulesintervalmin 30 -syncmodulesurl https://sav.cynet.com:443/api/EpsSyncDirectory?filePath=EPS_MODULES/idx.json -synctoken [sync_auth_token] -tknv 1 -tkn [new_token] -nosp </ServerArgList>

</plist>

This is where we first see [new_token], having presumably obtained it from their C&C.

So, rather than messing about with what seemed to be a binary protocol going to slb.cynet.com, I thought I'd have a look at the much more interesting sounding syncmodulesurl using sav.cynet.com

First thing to do then was to stand up a lazy Nginx man in the middle

server {

listen 80;

root /usr/share/nginx/empty;

index index.php index.html index.htm;

server_name sav.cynet.com;

location / {

proxy_set_header Host "sav.cynet.com";

proxy_pass https://sav.cynet.com;

}

}

server {

listen 443;

root /usr/share/nginx/empty;

index index.php index.html index.htm;

server_name sav.cynet.com;

ssl on;

ssl_certificate /etc/pki/tls/certs/snakeoil.crt;

ssl_certificate_key /etc/pki/tls/private/snakeoil.key;

ssl_session_timeout 5m;

location / {

proxy_set_header Host "sav.cynet.com";

proxy_pass http://127.1.1.1;

}

}

The flow for this is quite simple:

- Terminate the SSL connection and accept the request

- Proxy as plain HTTP over loopback

- Proxy on as HTTPS to the original service

Meaning we can trivially run a packet capture and look at what's going on.

So, a simple packet capture

tcpdump -i lo -v -s0 -w slb.pcap host 127.1.1.1

and then triggering the agent

sudo ./CynetEPS slb.cynet.com -port 443 -lightagent -cs -msi -tknv 1 -tkn [my_token] -nosp

And we quickly start seeing requests in Nginx's logs

192.168.1.70 - - [07/Apr/2020:12:10:34 +0100] "GET /api/EpsSyncDirectory?filePath=EPS_MODULES/idx.json HTTP/1.1" 200 2666 "-" "-" "-" "sav.cynet.com" CACHE_-

That we got a request in the first place tells us that the client didn't care that the cert we passed it was invalid for the requested name (I did also try configuring to pass a publicly trusted cert valid for a completely different name, just in case they were doing something arse-backwards like checking for a self-signed cert, but it was still accepted).

So, the next thing to do is to look in the capture and see what's going on

GET /api/EpsSyncDirectory?filePath=EPS_MODULES/idx.json HTTP/1.0

Host: sav.cynet.com

Connection: close

Accept: */*

Accept-Encoding: deflate

syncAuthToken: [sync_auth_token]

tkn: [new_token]

tknv: 1

HTTP/1.1 200 OK

Server: nginx/1.14.2

Date: Tue, 07 Apr 2020 11:10:34 GMT

Content-Type: application/json

Content-Length: 2666

Connection: close

Cache-Control: no-cache

Pragma: no-cache

Expires: -1

Content-Disposition: attachment; filename=idx.json

X-AspNet-Version: 4.0.30319

X-Powered-By: ASP.NET

{"filesMeta":[{"relativePath":"ALERTS_CONFIG\\AlertsConfiguration.json.gz","fileMd5":"B94E58129F5B6009612FEC51246B73E8","compressedFileMd5":"83224EC38D1C480FF3606AEDBBD98488","compressedFileSize":3111},{"relativePath":"AV\\Linux\\avupdate.bin.gz","fileMd5":"9B668FD98826F927C2FE2427359B574B","compressedFileMd5":"6EBDD102B2D3C05E449573FE38219DEC","compressedFileSize":1605304},{"relativePath":"AV\\Linux\\avupdate_msg.avr.gz","fileMd5":"EC84834FCE71ED8CFACB1E4AFC5C67AD","compressedFileMd5":"2E3B962B8DF98B279864B10E0F4907A7","compressedFileSize":6513},{"relativePath":"AV\\Linux\\libiconv.so.2.gz","fileMd5":"EA62BB37D1A936A5AE419372495126B1","compressedFileMd5":"04AFFF2BFC1649A57B37287139BD3EB2","compressedFileSize":660651},{"relativePath":"AV\\Linux\\libscew.so.1.gz","fileMd5":"AB5A356D4968CF3F4C62E9B649388DFA","compressedFileMd5":"A352FB22828EDA28189F8EFA0E0753DC","compressedFileSize":78494},{"relativePath":"AV\\MAC\\avupdate.bin.gz","fileMd5":"066EB023F08107B1E552D100777F680E","compressedFileMd5":"7EFDA177D863C8F599F214281C14984C","compressedFileSize":1470525},{"relativePath":"AV\\MAC\\avupdate_msg.avr.gz","fileMd5":"DD462A2CBBB38101DE1E9B8DBFB4211D","compressedFileMd5":"0F411DF49692CCB42DF97E1DBD7CF365","compressedFileSize":6462},{"relativePath":"AV\\MAC\\libiconv.2.dylib.gz","fileMd5":"AF3CC4DF8F8B96CE8163859BBFC7169C","compressedFileMd5":"E0ED1F1E9EE5790569AA1F0E5E36591C","compressedFileSize":675620},{"relativePath":"AV\\MAC\\libscew.1.dylib.gz","fileMd5":"9B8D0C5E2D5FCC4F6B770A72E1429886","compressedFileMd5":"5A583FFAE2065D07AD89AA1DF0EBD2A2","compressedFileSize":75179},{"relativePath":"Config\\cynetepsconfig.ini.gz","fileMd5":"7B0EF5CCD71B8F89173D9757ED91697B","compressedFileMd5":"89EC405DAC1E4C3838322B0C3CBC12FB","compressedFileSize":1009840},{"relativePath":"Config\\Linux\\CynetEpsConfig.ini.gz","fileMd5":"273D5991D7B45A9B68019E8DEAC7D694","compressedFileMd5":"03C5878B06D809CA1F07FB42DCBC5022","compressedFileSize":1087},{"relativePath":"Config\\Mac\\CynetEpsConfig.ini.gz","fileMd5":"12538CF4C88BC2B9546D8745831898E5","compressedFileMd5":"F8AB227AEAD8712AE629AF77F5083E23","compressedFileSize":671},{"relativePath":"CyAi\\V1\\model.txt.gz","fileMd5":"8EC56EC8969029672EAF964769570920","compressedFileMd5":"95E27BBD3E86657243D376E331FE79F0","compressedFileSize":4712719},{"relativePath":"CyAi\\V1\\version.txt.gz","fileMd5":"063ACAD8328865EB39C93FFF22FE4820","compressedFileMd5":"B96BB0F331186F5AF26844388B8661E5","compressedFileSize":30},{"relativePath":"VBA\\V1\\vba.exe.gz","fileMd5":"56598AFFABF3268EFDE49AC872FB2763","compressedFileMd5":"D7D0CEDFA5D026502A0F3F693C4EB3A9","compressedFileSize":94886}],"timestamp":1584901038}

So, we can see that it enumerates a bunch of OS specific config files and what appear to be gzipped binaries.

Although the response gives checksums, there isn't a cryptographic signature in sight. Even if there were, including signatures in this JSON response would give no benefit unless the agent checked them against a key it already held: at this point we (the attacker) can change the response to be whatever we want, including injecting our own signatures.
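The reason a bare checksum doesn't help is easy to show. The sketch below assumes (based purely on the field names in the listing) that fileMd5 covers the decompressed file and compressedFileMd5 covers the gzipped download; the helper names and payload bytes are made up for illustration. Whoever controls both the index and the download can make the two agree, so the check detects corruption in transit but says nothing about who produced the file.

```python
import gzip
import hashlib


def md5_hex(data: bytes) -> str:
    """Upper-case hex MD5, matching the style used in idx.json."""
    return hashlib.md5(data).hexdigest().upper()


def build_entry(relative_path: str, payload: bytes):
    """Return an index entry plus the gzipped blob it describes."""
    blob = gzip.compress(payload)
    entry = {
        "relativePath": relative_path,
        "fileMd5": md5_hex(payload),
        "compressedFileMd5": md5_hex(blob),
        "compressedFileSize": len(blob),
    }
    return entry, blob


def checksums_ok(entry: dict, blob: bytes) -> bool:
    """The integrity check a client can do using nothing but the index."""
    return (
        len(blob) == entry["compressedFileSize"]
        and md5_hex(blob) == entry["compressedFileMd5"]
        and md5_hex(gzip.decompress(blob)) == entry["fileMd5"]
    )


path = "AV\\Linux\\avupdate.bin.gz"
genuine_entry, genuine_blob = build_entry(path, b"genuine module bytes")
swapped_entry, swapped_blob = build_entry(path, b"substituted bytes")

print(checksums_ok(genuine_entry, genuine_blob))  # True
print(checksums_ok(swapped_entry, swapped_blob))  # True: rewritten index matches swapped file
print(checksums_ok(genuine_entry, swapped_blob))  # False: caught only if the index is untouched
```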

After a little bit of experimentation to figure out how the paths were constructed, I managed to request one of the files using curl

ben@milleniumfalcon:/tmp/cynet$ curl -k -v https://sav.cynet.com:443/api/EpsSyncDirectory?filePath=EPS_MODULES/ALERTS_CONFIG/AlertsConfiguration.json.gz -H "syncAuthToken: [sync_auth_token]" -H "tkn: [new_token]" -H "tknv: 1" -o /dev/null

% Total % Received % Xferd Average Speed Time Time Time Current

Dload Upload Total Spent Left Speed

0 0 0 0 0 0 0 0 --:--:-- --:--:-- --:--:-- 0* Trying 192.168.1.5...

* Connected to sav.cynet.com (192.168.1.5) port 443 (#0)

* found 148 certificates in /etc/ssl/certs/ca-certificates.crt

* found 603 certificates in /etc/ssl/certs

* ALPN, offering http/1.1

* SSL connection using TLS1.2 / ECDHE_RSA_AES_256_GCM_SHA384

* server certificate verification SKIPPED

* server certificate status verification SKIPPED

* common name: extbooks.bentasker.co.uk (does not match 'sav.cynet.com')

* server certificate expiration date FAILED

* server certificate activation date OK

* certificate public key: RSA

* certificate version: #3

* subject: C=UK,ST=Suff,L=Ips,O=BTasker,OU=BTasker,CN=extbooks.bentasker.co.uk,name=BTasker,EMAIL=mail@host.domain

* start date: Mon, 01 May 2017 13:40:08 GMT

* expire date: Wed, 01 May 2019 13:40:08 GMT

* issuer: C=UK,ST=Suff,L=Ips,O=BTasker,OU=BTasker,CN=BTasker,name=BTasker,EMAIL=mail@host.domain

* compression: NULL

* ALPN, server accepted to use http/1.1

> GET /api/EpsSyncDirectory?filePath=EPS_MODULES/ALERTS_CONFIG/AlertsConfiguration.json.gz HTTP/1.1

> Host: sav.cynet.com

> User-Agent: curl/7.47.0

> Accept: */*

> syncAuthToken: [sync_auth_token]

> tkn: [new_token]

> tknv: 1

>

< HTTP/1.1 200 OK

< Server: nginx/1.14.2

< Date: Tue, 07 Apr 2020 11:27:55 GMT

< Content-Type: application/x-gzip

< Content-Length: 3111

< Connection: keep-alive

< Cache-Control: no-cache

< Pragma: no-cache

< Expires: -1

< Content-Disposition: attachment; filename=AlertsConfiguration.json.gz

< X-AspNet-Version: 4.0.30319

< X-Powered-By: ASP.NET

<

{ [3111 bytes data]

100 3111 100 3111 0 0 12647 0 --:--:-- --:--:-- --:--:-- 12697

* Connection #0 to host sav.cynet.com left intact
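Going by the index listing and the request above, the path construction looks to be: take the relativePath from idx.json, swap the Windows-style backslashes for forward slashes, and put EPS_MODULES/ in front. A minimal sketch of that mapping follows; it's inferred from this one working request rather than from any documentation, and the function name is made up.

```python
BASE_URL = "https://sav.cynet.com:443/api/EpsSyncDirectory"
ROOT = "EPS_MODULES"  # prefix seen in the syncmodulesurl argument


def module_url(relative_path: str) -> str:
    """Build the download URL for a relativePath taken from idx.json."""
    posix_path = relative_path.replace("\\", "/")
    return f"{BASE_URL}?filePath={ROOT}/{posix_path}"


# relativePath as it appears once the JSON has been decoded (single backslashes).
print(module_url("ALERTS_CONFIG\\AlertsConfiguration.json.gz"))
# https://sav.cynet.com:443/api/EpsSyncDirectory?filePath=EPS_MODULES/ALERTS_CONFIG/AlertsConfiguration.json.gz
```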

The next question then was whether the binary looking things were actually executable binaries in their own right, or whether they were perhaps some form of pluggable module (which would raise the bar, although only by a tiny amount)

ben@milleniumfalcon:/tmp/cynet$ curl -k -v https://sav.cynet.com:443/api/EpsSyncDirectory?filePath=EPS_MODULES/AV/Linux/avupdate.bin.gz -H "syncAuthToken: [sync_auth_token]" -H "tkn: [new_token]" -H "tknv: 1" -o foo.gz

ben@milleniumfalcon:/tmp/cynet$ gunzip foo.gz

ben@milleniumfalcon:/tmp/cynet$ file foo

foo: ELF 64-bit LSB executable, x86-64, version 1 (SYSV), dynamically linked, interpreter /lib64/ld-linux-x86-64.so.2, for GNU/Linux 2.6.4, stripped

It's a standard executable, although running it gave me a missing library (and I wasn't particularly inclined to go further down the rabbit hole)

ben@milleniumfalcon:/tmp/cynet$ file foo

foo: ELF 64-bit LSB executable, x86-64, version 1 (SYSV), dynamically linked, interpreter /lib64/ld-linux-x86-64.so.2, for GNU/Linux 2.6.4, stripped

ben@milleniumfalcon:/tmp/cynet$ chmod +x foo

ben@milleniumfalcon:/tmp/cynet$ ./foo

./foo: error while loading shared libraries: libscew.so.1: cannot open shared object file: No such file or directory

I did a bit of hunting around with filenames, as well as running commands against the Cynet agent, in the hopes of finding a trace of anything which would suggest they were signing this code, but found nothing.

Putting it all together

So, we've a few bits of information now that we can put together to formulate an attack:

- The Cynet 360 Agent doesn't validate certificates when connecting back to Command & Control

- The Cynet 360 Agent doesn't validate certificates when fetching modules

- The Cynet 360 Agent runs with elevated privileges and executes potentially untrusted code once it's fetched it

So, our attack methodology is - in many ways - pretty straightforward

- Intercept or otherwise poison DNS for a victim system so that sav.cynet.com resolves to an adversary-controlled server (or, otherwise be on the network path in such a way as to be able to redirect packets) with an HTTPS server listening on port 443

- Proxy the majority of requests onto Cynet's servers

- Have the MiTM server return a malicious payload for a given file (e.g. AV\\Linux\\avupdate.bin.gz) and overwrite MD5s in the main listing

The malicious payload will be executed with elevated privileges by the Cynet 360 endpoint agent and so can be used to establish a more permanent foothold (by, for example, installing a backdoor).

Command and Control

I noted earlier that I didn't delve too far into the traffic on their main C&C connection - to slb.cynet.com.

However, whilst I didn't want to spend too much time tinkering with it, I think it is important to highlight just what a risk it too poses, so let's circle back around to it briefly.

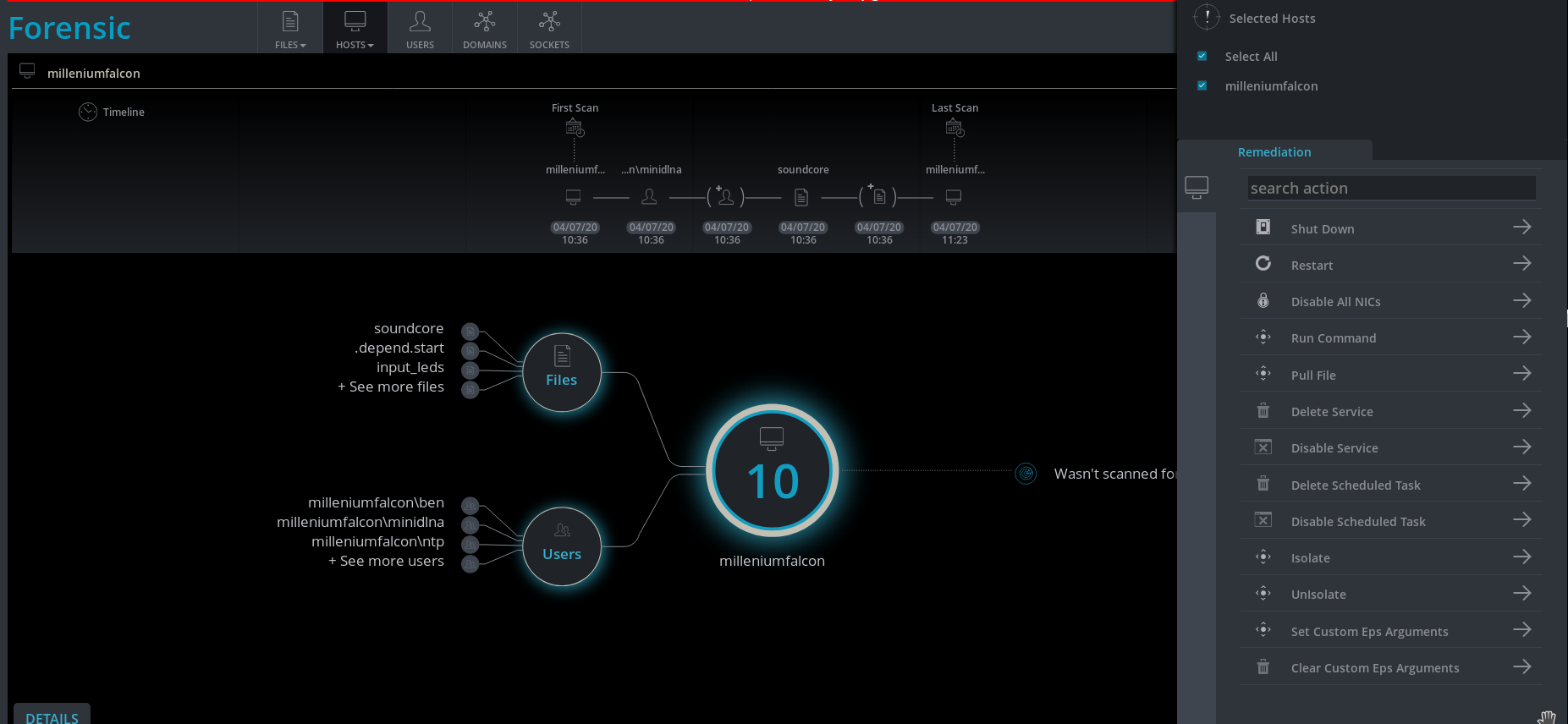

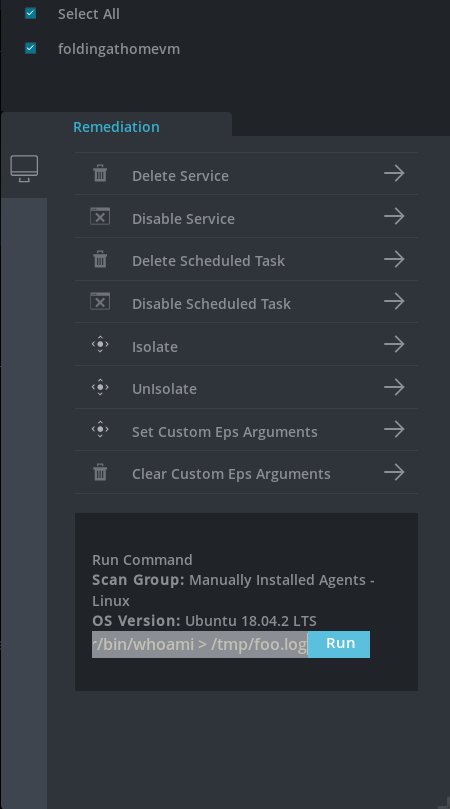

Cynet 360 may be intended as endpoint monitoring, but to a certain extent it incorporates endpoint management functionality, in the sense that you can actively push commands out to endpoints (I used a different VM to test this)

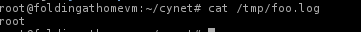

Which results in

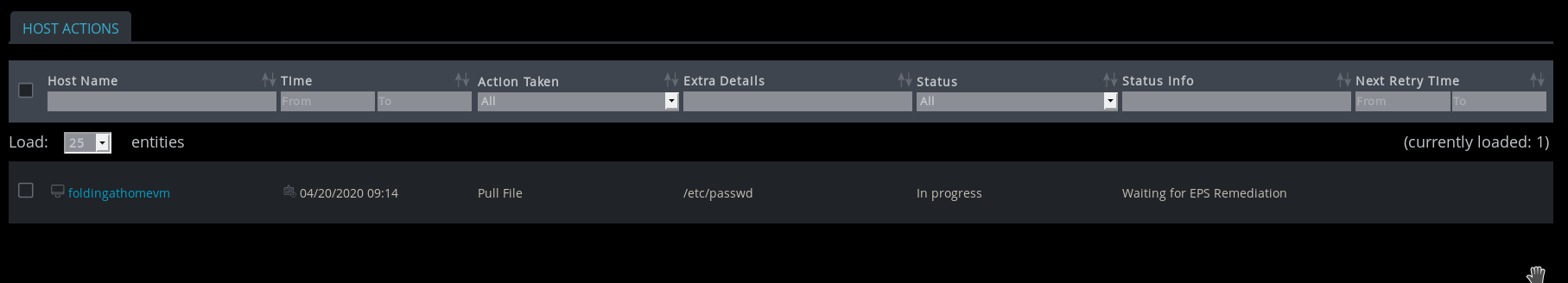

Although you could obviously do this with a custom command anyway, their dashboard will also let you exfiltrate arbitrary files

No logs of these actions are generated on the endpoint. Records only appear to exist within the centralised dashboard itself.

So, as we can see, the agent exposes extremely privileged access - it can send files "on demand" and will also run whatever command the dashboard user wants.

Whilst this level of access is arguably desirable in an endpoint management system, it can become extremely problematic when the control channel being used to communicate those commands is unverified/unauthorised.

An adversary willing to look more into the protocol they're using when communicating over this channel could inject arbitrary commands and have them run as root, or simply retrieve files which might be useful to them in other ways (for example retrieving SSL private keys so that traffic that the target box is serving can be MiTM'd).

None of their actions would be logged on the targeted box, and because the request never actually came via "real" C&C it also wouldn't appear in the activity histories there - the adversary essentially has root access without the need to do any log tampering to cover their tracks.

The issues we explored earlier with sav.cynet.com would allow an adversary to passively compromise Cynet 360 users, whilst the issues with the control channel (via slb.cynet.com) allow an adversary to take a much, much more active role.

Avoidance

Both of these issues could easily be avoided through either of 2 mechanisms (though, ideally, both should be used)

- Connections back to C&C should always require (and validate) a trusted certificate valid for the name being connected back to

- The agent should have a public key embedded into it, with the corresponding private key used to sign modules. The client should refuse to run any module it's fetched which is improperly signed. Signing C&C requests in this manner would also be beneficial, though care would need to be taken, as this would mean hosting live key material on C&C.

Additional

Whilst I was writing an email to Cynet to alert them to this issue, I ran a few additional commands just to verify/confirm things.

When running strings against their original avupdate.bin I noticed something that's potentially slightly concerning

<FIB_EXECUTABLE>

FNAME=avupdate.bin

VERSION=2.5.0.88

REVISIONTAG=HEAD

DATE=2019-07-31

TIME=15:24

HOST=lx-amd64-gc24

PLATFORM=linux_glibc24_x86_64

DISTRIBUTION=suse-10

SYSTEM=Linux-2.6.16.60-0.54.5-default

PROCESSOR=x86_64

LIBC=glibc-2.4 (20090904)

LIBDEPS=

</FIB_EXECUTABLE>

This seems to imply that the executable was built on a SUSE 10 box - SUSE 10 went End of Life in 2013 (and security updates ended in July 2016).

If modules are being built on an outdated (and therefore likely insecure) OS then there's a non-negligible risk of supply-chain attack. Compromise of the build box would allow an adversary to modify these modules "at source" rather than needing to go through the relative hassle of a MiTM.

Auth Tokens

As noted in the introduction, I've replaced all auth tokens in this write up with a placeholder like [my_token].

This wasn't done without reason - at time of writing (2 weeks later) I can still run the exact same curl to retrieve files as the tokens represented by [new_token] and [sync_auth_token] remain valid (despite my trial having expired). This is probably pretty harmless, but doesn't contribute towards a good impression.

Disclosure

I emailed my concerns through to Cynet's SOC upon discovery, and was told they were looking at implementing improvements. I've waited a couple of weeks since that discussion before publishing, although the issues are sufficiently basic that they'd be discovered almost immediately by anyone targeting the product.

Conclusion

There are essentially two components to an SSL connection, and one cannot function effectively without the other. The most obvious, in-flight encryption, prevents an observer from seeing (or injecting) payloads.

The other component, though, is verification.

We use the idea of a trusted certificate to verify that we've actually connected to an authorised server before we even begin to negotiate a key to use for our in-flight encryption. With this verification disabled, user-agents become extremely promiscuous and will negotiate keys with (and send data to) anyone who offers - particularly problematic when an adversary jumps in front of the authorised servers and offers up their own cert (knowing it won't be checked). There's absolutely no benefit to in-flight encryption if our user-agent is willing to send payloads directly to that adversary, encrypted using a key negotiated with them.

Certificate Authorities are not without their issues, but there's absolutely no benefit to a TLS connection if you're not verifying that you've connected to the right servers. Even if a public CA isn't used, certificates should still be validated against a known (and well controlled) trust store so that end points are actually authenticated.

Not to do so in something running (and receiving commands) as root really is a quite unforgivable oversight.

A quick check of the binary with strings suggests that, under the hood, the curl library is being used to establish connections to C&C. curl verifies certificates by default, which suggests that validation has been explicitly disabled by passing the appropriate CURLOPT.
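In libcurl the relevant switches are CURLOPT_SSL_VERIFYPEER and CURLOPT_SSL_VERIFYHOST, though which of them the agent actually sets is a guess, as only the behaviour was observed. As an analogue, here's what the same choice looks like in Python's standard ssl module: the default context verifies both the chain and the hostname, and it takes two explicit lines to turn that off. The function names are made up for the example.

```python
import ssl


def verifying_context() -> ssl.SSLContext:
    """Library defaults: validate the chain against the trust store and check the hostname."""
    return ssl.create_default_context()


def non_verifying_context() -> ssl.SSLContext:
    """What 'validation explicitly disabled' looks like: any certificate is accepted."""
    ctx = ssl.create_default_context()
    ctx.check_hostname = False       # has to be cleared before verify_mode can be relaxed
    ctx.verify_mode = ssl.CERT_NONE  # no chain validation at all
    return ctx


safe = verifying_context()
unsafe = non_verifying_context()

print(safe.check_hostname, safe.verify_mode == ssl.CERT_REQUIRED)   # True True
print(unsafe.check_hostname, unsafe.verify_mode == ssl.CERT_NONE)   # False True
```

A connection made with the second context would have accepted the snakeoil certificates used in the tests above; one made with the first would have failed the handshake.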

If you look at what Cynet offer on their platform, it's clear that there's been some real thought put into the feature set, along with some effort into developing their USP - "the world's first autonomous breach protection platform".

Unfortunately, the reality is that the product as it stands opens up a whole new attack surface - one which could and should have been avoided, but gives an adversary the ability to fairly trivially run privileged commands with no log to show it ever happened. That's a massive confidence knock for any product.

In this case, it really does seem like the Remedy may be worse than the Disease.