Why I won't have an Amazon Echo

I was recently asked to explain in a bit more depth why I'm not willing to have an Amazon Echo (or, more specifically - Alexa), so I thought I'd write an answer down too.

Although the question was specifically about Alexa (being the best-known), the answer applies to alternatives like Google Home (now Google Assistant), Microsoft's Cortana and Sonos One.

Although the technology is now quite old, voice-activated virtual assistants really are a cool bit of tech; I'm not disputing that for a second. In fact, in some ways I'd like nothing better than to hack an OBD-II connector onto an Echo, install it in the car and live a Knight Rider future.

But, as cool as these things are in principle, they are by design an always-listening microphone that you're willingly installing into your home. For the reasons I'll lay out below, that's not a small deal.

Trust

These things are literally designed to send recordings of your voice back to Amazon's servers - it's essential in order for them to be able to work.

What Amazon then do with those recordings is entirely up to them - although they limit their activities in Terms and Conditions, you have to trust that they'll actually abide by them. If not, the damage may be irrevocable. For example, you can email me nudes of yourself and I promise I'll look at them once and delete them. However, if I then upload those nudes onto the web, there's nothing you can do to undo that. You can sue me for breach of contract (and collect lots of money), but your nudes are still out in the wild. The point here is that if your only protection is someone else's promise, then you should consider that you are, for all intents and purposes, still exposed to the risk - the only protection you've gained is the ability to blame someone else.

There definitely seems to be an intent to use this technology too, as Amazon have patented the concept of using voice pattern analysis in order to identify your mood and then play adverts tailored to that - for example, trying to sell you medication if you sound depressed.

Given that Amazon appear to have failed even to avoid the use of child labour in building the Echo, it's rather hard to trust any promises they may make, particularly about what they may or may not do in the future.

Bugs and Odd Behaviour

However, there's more to this than the trust that Alexa asks for. After all, there must already be some trust in place if you shop with Amazon (particularly if you're buying anything even slightly... odd).

The problem is, it's not just deliberate breaches that you need to consider. Software bugs are a fact of life and Alexa is no stranger to them; the consequences can range from annoying to outright humiliating.

Here are just a few of my favourites:

- Being charged 500 euros by the police because Alexa held an all-night rave while you were out

- Ordering cat food because an advert told it to

- Ordering dollhouses because of a news report about Alexa accidentally ordering dollhouses

- Randomly laughing in the middle of the night

- Alexa Gone Wild - Suggesting porn to kids

- Alexa Skills being used for always on recordings

- Sending recordings of a private conversation with your spouse (lucky it was just about flooring) to one of your employees as a result of an extremely unlikely string of events

- Amazon accidentally send 1,700 voice recordings to wrong user after GDPR request

- Google Assistant records when it shouldn't, then those recordings get leaked

- Microsoft's Cortana Reviewers hear phone sex, full addresses and pornography searches.

- The devices are, at best, just a novel way for developers to make the same simple security mistakes.

- 2020: Echos will now share access to your Wi-Fi with randomers near your house

The list goes on, and realistically, is just going to continue to grow. Some are just creepy (like Alexa laughing in the night), but Alexa "accidentally" sending a recording you didn't even know was being made to someone in your contact list? Or Google recording "bedroom chatter" and then losing control of those recordings?

Of course, you can set Alexa to require a button to be pushed before she'll listen, but as Google Home showed, sometimes manufacturing defects result in the opposite effect - a box that is listening and sending the whole time. Update: In fact, as time has progressed, it seems the idea of only listening for a short time is increasingly coming under attack.

Ultimately, even if you trust Amazon not to deliberately abuse that trust, there's every possibility that they may inadvertently do so. Once something's happened, it's very hard (if not impossible) to take it back, and things spread very quickly in the modern age.

It's not even like it's just Amazon you need to trust. These things are voice activated: all you need to control them is the ability to play audio, and it doesn't even need to be audible to humans. So in the list of people you have to trust, there's every advertiser on TV, every YouTube publisher, every Spotify playlist. Essentially, anyone who creates anything you might play back or watch in hearing range of Alexa.

Because of my background, I tend to look for failure modes, and I can see far more than I'm comfortable with.

Where's the harm?

Through all of this, you may have been thinking "but it's just a recording of my voice, why would I care?". It matters more than you may immediately think. It's not just the sound of your voice (though that matters too) but also the content.

In one of the example issues above, Amazon's explanation was as follows:

The Echo woke up due to a word in background conversation sounding like “Alexa.” Then, the subsequent conversation was heard as a “send message” request. At which point, Alexa said out loud “To whom?” At which point, the background conversation was interpreted as a name in the customers contact list. Alexa then asked out loud, “[contact name], right?” Alexa then interpreted background conversation as “right.” As unlikely as this string of events is, we are evaluating options to make this case even less likely.

The couple in question were in another room, talking about hardwood floors. Somehow in that conversation, Alexa managed to interpret all the commands necessary to send the recording to one of the husband's employees (using his contact list). That's an incredibly unlikely string of events, yet it happened. The law of truly large numbers tells us that, given enough devices listening for enough hours, something similar will happen again one day.
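To put some rough shape on that claim, here is a back-of-envelope sketch. Every number in it is an assumption made up purely for illustration (Amazon has published no such figures): the per-device daily probability `p_per_device_day`, the device count and the time window are all hypothetical. It simply computes the chance that a very rare event occurs at least once across a large number of independent device-days, using 1 - (1 - p)^n.

```python
import math


def chance_of_at_least_one(p_per_device_day: float, devices: int, days: int) -> float:
    """Probability that a rare event happens at least once across all device-days.

    Assumes every device-day is an independent trial with the same probability,
    which is a simplification made for the sake of the illustration.
    """
    trials = devices * days
    # 1 - (1 - p)^trials, computed via log1p/expm1 so tiny p values stay accurate.
    return -math.expm1(trials * math.log1p(-p_per_device_day))


# Hypothetical inputs: a one-in-a-billion chance per device per day,
# ten million devices, observed over one year.
p = 1e-9
devices = 10_000_000
days = 365

print(f"Single device, single day: {chance_of_at_least_one(p, 1, 1):.2e}")
print(f"All devices, one year:     {chance_of_at_least_one(p, devices, days):.3f}")
```

With those made-up inputs, an event that is vanishingly unlikely for any one household comes out at better than a 97% chance of happening to somebody within the year. The exact figures don't matter; the point is how quickly "incredibly unlikely" stops being reassuring once the number of devices gets large.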

Now, ask yourself, what if they hadn't been talking about hardwood floors? What if they'd been talking about BDSM or something much more private? What if they'd been fucking on the sofa? Would you be happy with that being emailed to someone in your contact list? In this instance, the employee phoned them and warned them, but would all employees be as considerate (particularly with potential blackmail material)?

In reality, would it be the end of the world if a recording surfaced of me grunting for a few minutes and then asking for a sandwich? Probably not, but it's still something I (and I assume most people) would like to avoid, so the benefits from Alexa need to outweigh that risk, and I just don't see any aside from the cool factor.

More concerningly, though, embarrassment isn't the biggest risk here.

As worthless/unimportant as you might think a recording of your voice is, your voice can be used to identify you. The Taxman uses Voice Pattern Analysis as an authentication mechanism (they call it VoiceID) when you phone them. It's a patently fucking stupid idea to use any form of biometrics for authentication rather than identification, but it is unfortunately common. HMRC are not the first to use it, and they absolutely won't be the last.

Those unimportant recordings of your voice can be used in order to analyse your voice pattern and synthesise your voice saying the appropriate unlock phrases (none of which are secret). All that's needed is 1 minute of audio.

If you want to raise the paranoia level a little more and start invoking deep state theories, you should also consider this. Voice recordings are considered to be strong evidence, but with the appropriate input information available it's now possible (almost at a consumer level) to synthesise anyone saying anything.

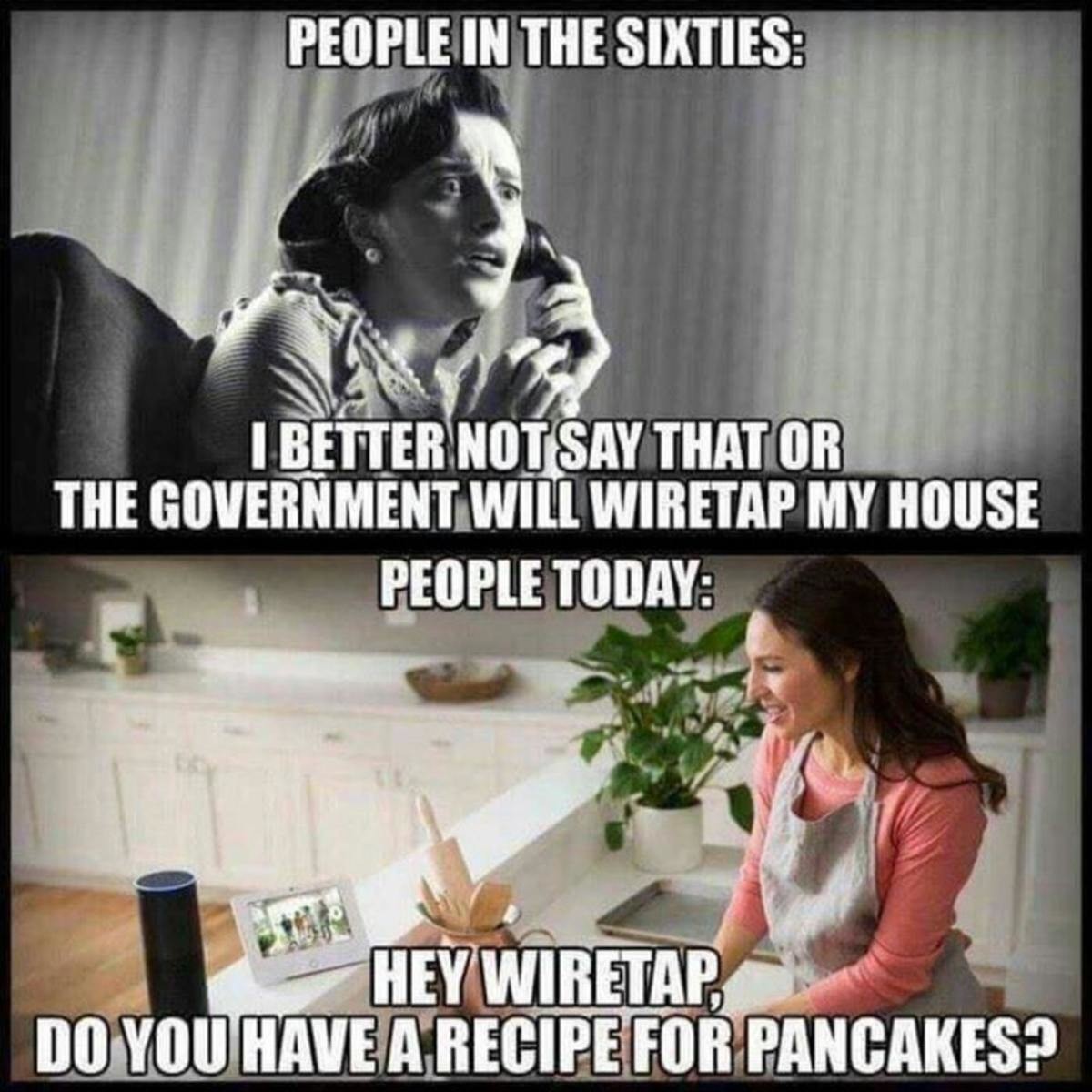

The Wiretap can become a Wiretap

In our current society, this is (hopefully) unlikely to affect most people, but should still be considered - especially given that power and governmental structures will change many times within our lifetimes.

We saw above that these devices can accidentally become 'always on', but it's also possible for them to become deliberately so, and not just as a result of malicious third-party developers (who Amazon can at least identify and block).

By installing an Echo, you're putting an internet-connected microphone into your house, under the control of a third party (Amazon). That third party needs to comply with local laws and court orders, and so may be ordered to discreetly switch Alexa to an always-on mode.

In today's society, you might hope that that'd only happen to people who should legitimately be of interest, but, you do not know what tomorrow's society and government will be like. They may prefer to abuse the tools you've made available to them, given the power and leverage it provides. Given that the police hierarchy once seemed to think that undercover police impregnating activists was acceptable, it would be unwise to assume that any of your social or moral values are also held by any of those in power, now or in the future.

We're living in a post-Snowden world. We know that the Intelligence Services have been playing incredibly fast and loose with privacy, and slurping up anything and everything they can lay their hands on. Although Industry has moved to make this harder for them, it doesn't seem like a wise idea to continue unnecessarily generating sensitive data for them, particularly as the private approach and attitudes of those at the top almost certainly haven't changed at all (as evidenced by the continued calls to fatally undermine cryptography by inserting "magic" backdoors).

In the US, Third Party Doctrine holds that people who voluntarily give information to third parties have "no reasonable expectation of privacy". It's already been largely agreed that this extends to recordings of your voice if you install an Echo (though it's unsettled as to whether it applies to those who are not the device owner). Recordings from Alexa have already been used in a murder trial in the US (though the case was subsequently dropped).

In the UK, we're still not much better off. You do not even need to be specifically targeted: thanks to the Investigatory Powers Act, the Government can use what they call Bulk Equipment Interference powers - everyone else would call that mass indiscriminate hacking - in order to compromise devices on a wide scale if they feel they can justify it (to themselves, not to the taxpayer).

Even without Amazon's co-operation though, there's still another issue. In a room with an Echo, it's fully expected that there'll be a device with a microphone - the Echo itself. What happens, though, if someone has opened that device up and modified it to also send them audio recordings? Would you ever know? There are no unexpected bugs to find.

I'm not (I hope) of any real interest to the Government, and hopefully, it'll always remain that way. But, if that ever does change, they can damn well put the effort in to bug the house properly, I'm not going to be doing it for them.

Conclusion

I've likely skipped over more than a few things, but the root of my objection to Amazon Echo and other Voice Activated Virtual Assistants is that the privacy implications simply aren't outweighed by the benefits. What is the true benefit of Alexa once the novelty has worn off? Why does everyone want me to talk to my device? I was always taught that talking to my computers would make people think I was crazy.

In my mind, the trust equation doesn't even come close to balancing. In order to feel the risks are sufficiently mitigated, there's a huge pool of people you need to trust, as well as trusting that Amazon won't accidentally introduce bugs into the firmware. Just one slip-up, combined with idiotic behaviour by an organisation like HMRC, and you're open to having a potentially very uncomfortable time.

There are, of course, people who will feel the utility of Alexa outweighs the potential drawbacks, and that's fine. It is, after all, a personal risk assessment that you have to make. I see too much potential harm for no defensible purpose, and that's before someone like me walks into your house.

And in case you're wondering, yes, the same objections apply to phone-based "assistants". In many ways, all the more so, as you're often then carrying that wiretap around with you everywhere you go. Keeping in mind the issues that have been discussed above, it should come as a bit of a concern that Gavin Williamson, the British Defence Minister, seems to have set Siri to always listen.